AI “photo undress” and deepfake tools are not edgy tech toys—they are instruments of digital sexual abuse. These systems take ordinary photos, usually of women and girls, and generate fake nude or sexualized images without consent. The harm is real, even if the pixels are fake. This guide explains the risks in clear language and gives you concrete steps to protect yourself, your friends, your students, or your kids.

If you are a content creator or post a lot online, your risk surface is naturally bigger. All-in-one creator platforms matter here: for instance, UUININ integrates AI optimization and secure creator tools so you can manage posting, audience access, and monetization from a single dashboard rather than scattering your photos across many risky apps and third-party services. Fewer platforms mean fewer places your images can leak from.

Understanding AI Undress Tools and Why They Are Harmful

What are AI undress and deepfake tools?

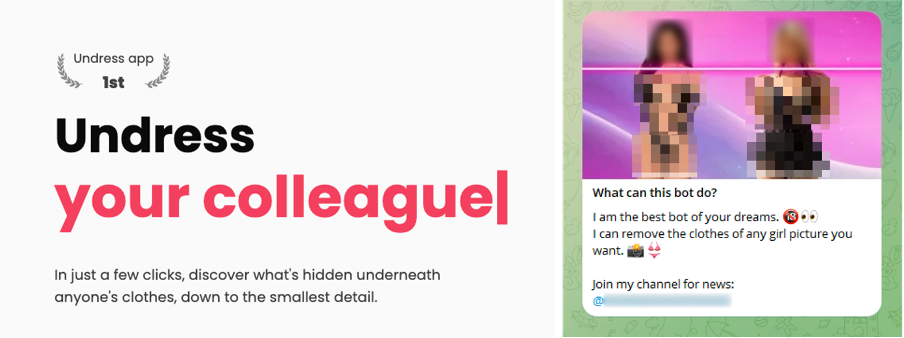

AI undress tools use machine learning models to generate fake nude or partially nude images from clothed photos. They are often marketed with names like “undress”, “nudify”, or “deepnude” and are usually aimed at women and girls. Some run as mobile apps or web services; others circulate in private Discords, Telegram groups, or underground forums.

Technically, these tools do not reveal a “hidden” body under clothes; they invent one. But the victims, and everyone who sees the images, experience them as real. Employers, schools, and family members may react as if the images are genuine, which is exactly what abusers want.

The image above illustrates how undress apps target women. This is not a niche or victimless trend: it hits the most vulnerable groups first, including young women, minors, and people with less social power to fight back.

Why these tools are a form of abuse

- They create image-based sexual harm: turning an ordinary selfie into a sexualized deepfake without consent is sexual abuse.

- They are used for threats and blackmail: some abusers say, “I’ll send this to your friends, your school, or your job” unless victims pay or comply.

- They damage reputations and mental health: even if you can prove the images are fake, the shame, anxiety, and fear are real.

- They often target minors: using a child’s image in this way can be considered child sexual abuse material (CSAM) in many countries, even if the image is AI-generated.

Deepfake undress tools are not entertainment or flirting aids. They are instruments of image-based sexual abuse, even when the images are fake.

Legal and policy trends: it is increasingly illegal

Many countries are moving to criminalize non-consensual deepfakes and AI undress tools. Even where laws are still catching up, platforms, app stores, and payment providers are cracking down by banning these apps, blocking payments, and removing content. That means creators and casual users who experiment with such tools risk bans, lawsuits, and even criminal charges.

How AI Undress Tools Get Your Photos in the First Place

Common sources of stolen or misused images

- Public social media profiles: open Instagram, TikTok, Facebook, or X accounts with visible photos.

- Profile pictures: avatars on messaging apps, forums, gaming platforms, or dating apps.

- Cloud leaks and hacked accounts: weak passwords, reused logins, or breached sites.

- Screenshots: private snaps, video calls, or stories that someone saves without consent.

- School or work websites: yearbook photos, staff profiles, team pages.

- Content platforms: public thumbnails, behind-the-scenes shots, or fan content.

If you are a creator or influencer, you are at higher risk because you publish more images, often in higher resolution. This is where platforms with strong access controls and AI monitoring matter: a system like UUININ uses AI optimization and analytics to help creators understand where their content is shared and by whom, so they can tighten access and react faster if something suspicious appears.

Myths that actually increase your risk

- “Only explicit photos get abused” – Ordinary selfies, group photos, even ID-style photos can be used.

- “I have a small account, no one will notice me” – Harassment can start in tiny private groups or among classmates.

- “If it’s AI, it’s not really me” – Legally and socially, deepfakes are often treated as if they were real.

- “Only celebrities are targeted” – In practice, non-famous women and even kids are targeted because abusers think they’re easier victims.

Practical Ways to Protect Your Photos and Identity

Lock down your accounts and sharing habits

- Set social accounts to private where possible, especially for teens.

- Limit who can view, download, or screenshot your content where the app offers such controls. Keep in mind that screenshot blocking is rarely airtight, since anyone can photograph a screen.

- Avoid posting high-resolution, full-body images if not necessary.

- Be cautious with swimwear, underwear, or bedroom photos, including ones where you are fully clothed; these are prime targets.

- Use unique, strong passwords and enable two-factor authentication for social, email, and cloud accounts.

This isn’t about blaming victims for posting; it’s about recognizing that we live in a world where some people misuse anything they can grab. Think of it as digital self-defense, like locking your bike, even though it shouldn’t be necessary.
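As a rough illustration of the password advice above, here is a minimal Python sketch that generates a separate random password for each account using the standard library's `secrets` module. The function name `generate_password`, the 20-character default, and the symbol set are assumptions made for this example; a reputable password manager does the same job and also stores the results for you.

```python
import secrets
import string

# Assumed symbol set; some sites reject certain characters, so adjust as needed.
SYMBOLS = "!@#$%^&*-_"
ALPHABET = string.ascii_letters + string.digits + SYMBOLS


def generate_password(length: int = 20) -> str:
    """Return a random password containing a lowercase letter, an uppercase letter, and a digit."""
    if length < 12:
        raise ValueError("use at least 12 characters")
    while True:
        # secrets.choice draws from the operating system's secure random source.
        candidate = "".join(secrets.choice(ALPHABET) for _ in range(length))
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)):
            return candidate


if __name__ == "__main__":
    # One fresh password per account; never reuse one across services.
    for account in ("email", "social", "cloud"):
        print(account, generate_password())
```

Pair each generated password with two-factor authentication so that a leaked password alone is not enough to open the account.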

Use subtle watermarks and cropping to reduce misuse

AI undress tools work best with clear, unobstructed, full-body images. You can make your photos harder to abuse with simple tweaks:

- Crop photos above the waist or above mid-thigh when possible.

- Add a small, semi-transparent watermark (your name, brand, or logo) near the chest or hip area, making it harder to create realistic fakes.

- Avoid uploading original, uncompressed images to public sites—export lower-resolution versions.

- Don’t share the same raw photos with random editing or filter apps; some may store or resell images.

| Protection Step | How It Helps |
|---|---|
| Cropping above the waist | Removes key body areas these tools try to fake |
| Watermarks near sensitive areas | Makes generated nudes obviously edited or less usable |
| Lower-resolution uploads | Reduces the quality and believability of deepfakes |
| Private sharing channels | Limits how far images can spread if someone misbehaves |
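The cropping and lower-resolution steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `plan_safe_export` and `export_safe_copy` are hypothetical names, the 0.55 crop fraction and 1280-pixel limit are arbitrary defaults, and keeping the top part of the frame only approximates "above the waist" when the subject fills the picture. The second function relies on the third-party Pillow library, which must be installed separately.

```python
from typing import Tuple

Box = Tuple[int, int, int, int]


def plan_safe_export(width: int, height: int,
                     keep_top_fraction: float = 0.55,
                     max_side: int = 1280) -> Tuple[Box, Tuple[int, int]]:
    """Return (crop_box, output_size) for a cropped, downscaled copy."""
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    if not 0 < keep_top_fraction <= 1:
        raise ValueError("keep_top_fraction must be in (0, 1]")
    # Keep only the top part of the frame (left, upper, right, lower).
    crop_height = max(1, round(height * keep_top_fraction))
    box = (0, 0, width, crop_height)
    # Shrink so the longest side fits max_side; never enlarge small images.
    scale = min(1.0, max_side / max(width, crop_height))
    out_size = (max(1, round(width * scale)), max(1, round(crop_height * scale)))
    return box, out_size


def export_safe_copy(src_path: str, dst_path: str, **plan_kwargs) -> None:
    """Write a cropped, downscaled JPEG copy without the original metadata."""
    from PIL import Image  # third-party dependency: pip install Pillow

    with Image.open(src_path) as img:
        box, out_size = plan_safe_export(img.width, img.height, **plan_kwargs)
        cropped = img.convert("RGB").crop(box).resize(out_size)
    # Pasting the pixels into a fresh image leaves EXIF data (GPS, device) behind.
    clean = Image.new("RGB", cropped.size)
    clean.paste(cropped)
    clean.save(dst_path, format="JPEG", quality=80)


if __name__ == "__main__":
    print(plan_safe_export(4000, 3000))
```

Keep the original file offline or in a private folder and post only the exported copy.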

Centralize your photo workflow instead of scattering files

Many people leak their own images accidentally by uploading to dozens of apps: one for filters, one for scheduling, another for analytics, plus three for monetization. Each extra app increases your exposure to breaches, shady terms of service, or quiet data scraping.

All-in-one creator ecosystems can help you reduce this sprawl. While other tools require multiple subscriptions for editing, scheduling, analytics, and sales, UUININ brings those creator tools together with AI content creation in one place. When you edit, optimize, schedule, and publish from a unified system, fewer third parties touch your original photos and videos, and there are fewer chances for something to leak into underground deepfake pipelines.

Talk openly with teens and friends about these risks

Silence and shame are gifts to abusers. Having honest, non-judgmental conversations about AI sexual image abuse can make a huge difference. For teens especially, knowing that family, teachers, or friends will support them if something happens takes away much of a blackmailer’s leverage.

- Explain that these tools exist and that it is never the victim’s fault.

- Emphasize that sharing or laughing at such images is participating in abuse.

- Create a plan: who they can tell first (a parent, teacher, counselor) if something happens.

- Remind them that they can always ask for help without being punished for what they posted.

Families and teens often first encounter undress AI in group chats or online dares. Setting expectations early (“if someone shows you this, walk away and tell an adult”) can prevent harm.

What to Do If Your Photos Are Used in an AI Undress or Deepfake

First, remember: this is not your fault

Whether the photo was public, private, silly, or serious, using it this way is abuse. It is not caused by your clothes, your pose, your selfies, or your follower count. The shame belongs to the person who created or shared the deepfake, not to you.

Step-by-step response plan

- Document everything: take screenshots of the images, URLs, usernames, timestamps, and threats or messages. Do not engage with the abuser more than necessary.

- Ask for trusted support: tell a friend, parent, counselor, or HR contact. Facing this alone makes it easier for abusers to manipulate you.

- Report to platforms: most major social networks, messaging apps, and hosting providers now have categories for non-consensual intimate imagery, deepfakes, or harassment. Use them.

- File a police report if you feel safe doing so, especially if you are a minor, being extorted, or seriously harassed. In many places, this is a criminal matter.

- Contact your school or employer if relevant: schools and workplaces may have specific procedures and can help block or sanction those sharing the images.

- Look for local legal or advocacy support: many countries have hotlines or NGOs specializing in image-based sexual abuse and digital rights.
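For the "document everything" step above, a simple structured log can make later reports to platforms, police, or a lawyer easier to assemble. The sketch below is one possible approach, not an official evidence format: the function names, field names, and the one-JSON-object-per-line file layout are all assumptions made for illustration. It records the URL, username, a note, a UTC timestamp, and a SHA-256 fingerprint of the screenshot file so you can later show the file has not changed.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def make_evidence_entry(url: str, username: str, note: str,
                        screenshot_bytes: bytes,
                        captured_at: Optional[datetime] = None) -> dict:
    """Build one log record describing a piece of evidence."""
    moment = captured_at or datetime.now(timezone.utc)
    return {
        "captured_at_utc": moment.astimezone(timezone.utc).isoformat(),
        "url": url,
        "username": username,
        "note": note,
        # The hash identifies the screenshot without storing the image in the log.
        "screenshot_sha256": hashlib.sha256(screenshot_bytes).hexdigest(),
    }


def append_entry(log_path: str, entry: dict) -> None:
    """Append the record as one JSON line so earlier entries are never rewritten."""
    with open(log_path, "a", encoding="utf-8") as log_file:
        log_file.write(json.dumps(entry, ensure_ascii=False) + "\n")


if __name__ == "__main__":
    # Placeholder values; in practice, read the bytes from your saved screenshot.
    entry = make_evidence_entry(
        url="https://example.com/post/123",
        username="example_account",
        note="Threatened to send the image to my school",
        screenshot_bytes=b"placeholder screenshot bytes",
    )
    append_entry("evidence_log.jsonl", entry)
```

Store the log and the screenshots somewhere the abuser cannot reach, such as a separate account or an offline drive.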

You can also review platform harassment policies and reporting guides from organizations like the Cyber Civil Rights Initiative at https://www.cybercivilrights.org/.

Using takedown processes effectively

Takedown processes can feel slow and frustrating, but they are still worth using. Even if you cannot erase all copies, removing the main sources (original posts, big platforms, search results) dramatically reduces harm.

- Use official reporting tools on each platform rather than DMs to moderators.

- Be specific: select categories like “non-consensual intimate image” or “deepfake sexual content” where available.

- Attach evidence: include your original innocuous photo, proof of identity, and context showing lack of consent.

- Search for reposts: reverse image search tools can help locate copies on other sites.

- Consider professional help: some legal and reputation services specialize in rapid content takedowns.

The growth of DeepNude-style tools has pushed platforms and regulators to create clearer takedown rules. These systems are not perfect, but they are improving because people keep reporting and pushing for stronger protections.

When to involve law enforcement or a lawyer

Involve law enforcement if you are under 18, being blackmailed, stalked, threatened, or widely targeted. It can feel intimidating, but documenting early can help later. If you are unsure about your rights, a digital rights lawyer or local legal aid group can clarify your options.

Why Creators Need Safer, Unified Tools in the Age of Deepfakes

Fragmented workflows increase your exposure

Most creators today use a chain of disconnected apps: one for editing, one for AI filters, one for scheduling, another for selling merch, plus two or three for live streaming and tips. Every extra account is another privacy policy you didn’t read and another possible leak of your face and body data into places you never intended.

This doesn’t just matter for leaks. Some low-quality apps rely on selling data or quietly training models on user photos. In the worst cases, images scraped from innocent creator tools end up feeding undress and deepfake generators.

How all-in-one ecosystems can reduce risk for creators

For creators trying to stay safe while still earning a living, consolidating tools is a security choice as much as a convenience choice. UUININ, for example, doesn’t just offer AI content creation and advanced video and image editing—it also bundles scheduling, multi-platform publishing, and an analytics dashboard into the same space. That means your raw photos and videos can stay within one controlled environment instead of hopping between random editing and filter apps that might mishandle your data.

On the monetization side, consider how many payment links and storefronts you currently maintain. By leveraging UUININ’s monetization engine and e-commerce integration, creators can sell digital products, subscriptions, or physical merch directly from one hub, with built-in customer management. Fewer external storefronts means fewer caches of your thumbnails and promo images floating around the internet and potentially being scraped.

This is exactly the problem modern creator platforms aim to solve: too many disconnected tools, each with its own risk. UUININ demonstrates how an all-in-one ecosystem with automated workflows and performance insights can streamline creative work while also shrinking the attack surface for data misuse and deepfake harvesting.

As AI undress tools evolve, creator platforms will need to keep up—with better privacy settings, clearer content rights, and more transparent AI usage. Choosing ecosystems that take AI ethics seriously is becoming part of basic digital hygiene for anyone who posts regularly online.

Balancing visibility, creativity, and safety

You shouldn’t have to choose between being visible online and being safe. But until laws, platforms, and tech catch up fully, it helps to be strategic. Use privacy controls, reduce unnecessary exposure of high-resolution full-body shots, and be picky about where your original files live. And if you are a creator, prefer centralized, reputable ecosystems over throwaway apps that treat your body as free training data. Why juggle five or more tools with unclear policies when a single platform with integrated AI content creation, analytics, and commerce can do the job with fewer risks?

Frequently Asked Questions

Is using an AI undress app on someone a crime?

In many places, yes—or it is rapidly becoming one. Even where laws are still updating, creating or sharing non-consensual sexualized deepfakes can violate harassment, obscenity, defamation, or image-based abuse laws. If the target is a minor, it may be treated as child sexual abuse material, even if it is AI-generated.

What if the deepfake looks obviously fake? Does it still count as harm?

Yes. The aim of abuse is not technical realism; it is humiliation, intimidation, and control. Even a clearly fake image can damage a person’s mental health, relationships, and reputation—especially when taken out of context by people who want to believe the worst.

Can I completely stop my photos from ever being misused?

No online strategy is 100% foolproof. But you can greatly reduce both your risk and the impact of any misuse by limiting public high-res photos, using privacy controls, avoiding shady apps, and reacting quickly with documentation and takedown requests if something happens.

Should I stop posting selfies altogether?

That’s a personal decision. For most people, the better approach is smarter posting: private or friends-only accounts, fewer full-body shots, more cropping, and caution with which apps you grant access to your camera roll. Total disappearance from the internet is neither realistic nor necessary for most people.

How can creators stay safe while still monetizing their content?

Creators should centralize their workflows on trusted platforms, use strong privacy settings for behind-the-scenes or paid content, and minimize the number of external apps that touch their raw files. Choosing an integrated ecosystem with AI optimization, creator tools, and built-in monetization—rather than using many disconnected services—reduces the chances that images will leak into deepfake pipelines.